Happy year of the Fire Horse. May it cleanse the world. 🔥🐎

And we’re back! This week I am joined by Blackjack Hall of Fame member Richard Munchkin to discuss Ken Uston’s messy and outrageous debut book, The Big Player. For most of the 1980s Ken Uston was a celebrity for beating casinos at blackjack. He wrote countless books, his picture adorned magazine covers and video game boxes, he appeared on national television and hung out with stars. And The Big Player was the book that started it all. Munchkin tells me about what it was like to deal to Uston, what the blackjack faithful really thought of him, and how people still make a living playing blackjack today. Presented by InGame.com.

Buy The Big Player by Ken Uston

Buy Gambling Wizards by Richard Munchkin

More books by Huntington Press Publishing

Download audio: https://api.substack.com/feed/podcast/174066684/b920c7992982c40e07a8580c248f6082.mp3

Read more of this story at Slashdot.

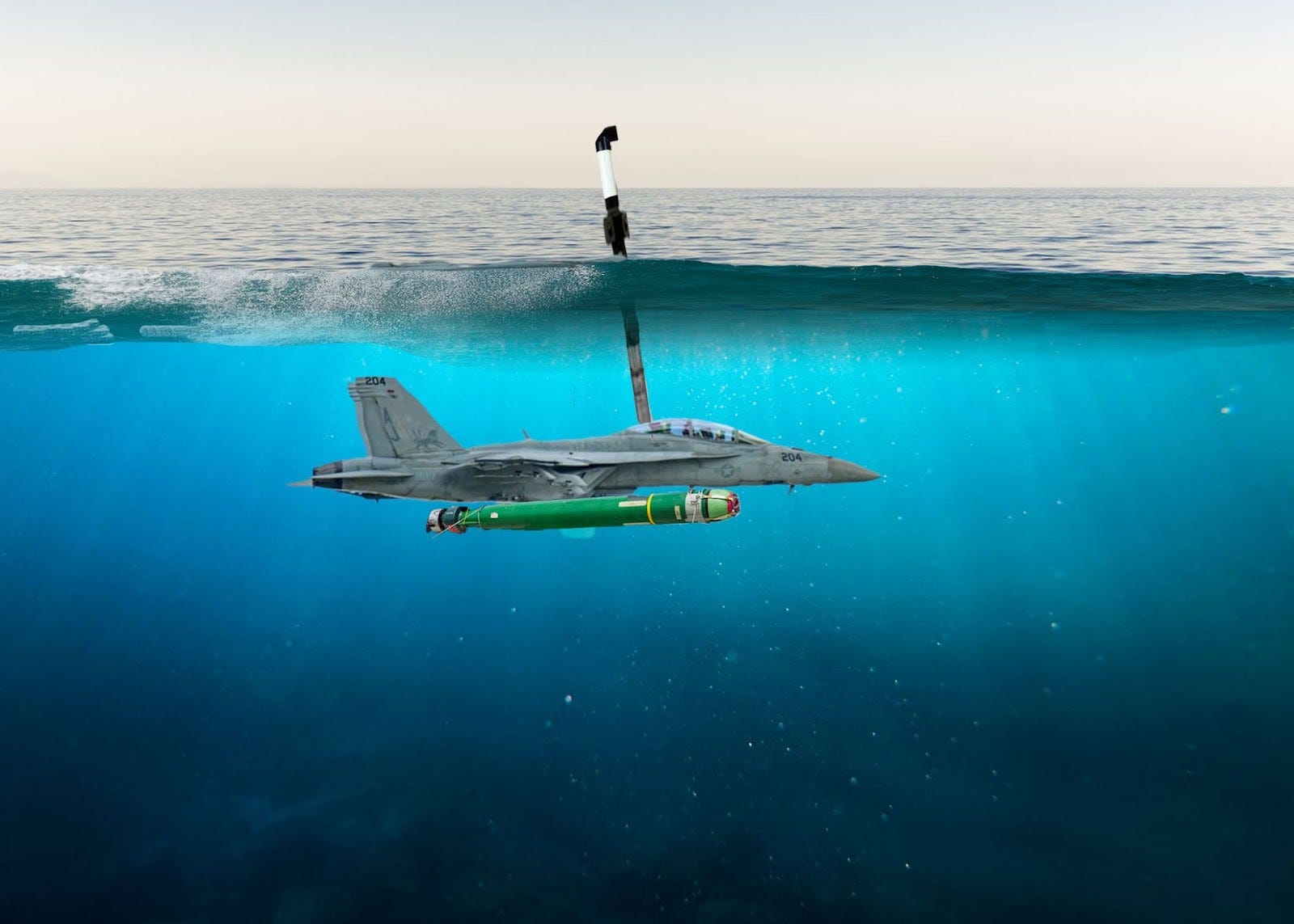

NAVAL STATION NORFOLK, Va. — After months of losing multi-million-dollar aircraft to the ocean — a known enemy of aviation — the U.S. Navy has completed retrofitting its F/A-18 Super Hornets to operate beneath the sea.

“As of this week, Navy pilots can fly, float, or flail in any environment,” said a visibly relieved Adm. James W. Kilby, Vice Chief of Naval Operations.

“We’ve waterproofed all our operational fleet of F/A-18 Super Hornets, added periscopes, and fitted them with antiship and antisubmarine munitions. So regardless of whatever environment our aircraft wind up in, they should be good to go.”

The upgraded jets, dubbed the F/A-18 Salmon, can achieve a speed of Mach 1.6 in the air, and “up to 4 knots per hour when heading upstream” while submerged, Kilby said.

The overhaul follows a string of embarrassing incidents for the Navy, which has lost three F/A-18s in the Red Sea due to various mishaps and accidents that unintentionally caused the $67 million fighters to join the service’s undersea fleet. While the Navy indicated that a high operational tempo may be responsible, others in the Pentagon feared that America’s enemies were exploiting an inherent vulnerability: most, if not all, aircraft were incapable of operating underwater.